The war in Iran has highlighted how the use of artificial intelligence (AI) in video production can influence public perception during peak periods of information consumption, as the countries involved in the conflict also seek to shape their own narrative.

But this phenomenon can have a very strong emotional impact on countries directly involved in the war, prompting their governments to take strict containment measures.

Easy, inexpensive access to AI-based video technologies has flooded social networks with AI-manipulated videos and photos since the beginning of the war in Iran. They show battles, impacts on civilian areas, or statements, and they fuel misinformation that can significantly influence how the war and the reality on the ground are perceived.

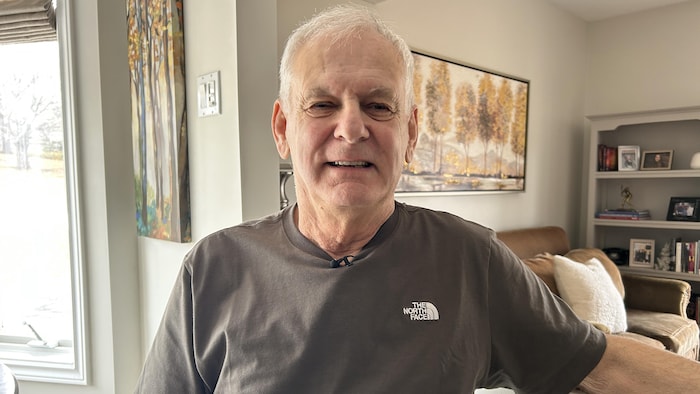

“Spectacular images and videos, purporting to show real-time scenes of combat and missile strikes, flood social media news feeds, spread rapidly, and deceive millions of people,” explains Marc Owen Jones, a professor of media analysis at Northwestern University in Qatar, describing how the war is unfolding online.

Jones, a specialist in social media influence, disinformation, and online politics, believes that social media has become a battleground for competing narratives in this conflict, with all parties and their supporters now seeking to win “hearts and minds” online.

From the American side, Jones mentions “videos interspersed with excerpts from Hollywood films, a sort of memeification of communication designed to appeal to a far-right aesthetic that rejects empathy in favor of humiliation.”

On the other hand, “Iran is rising in power, often mocking the United States with its memes, but many AI-generated images seem to exaggerate Iranian military successes, perhaps to increase pressure on Gulf countries to push for de-escalation,” he adds.

Advances in artificial intelligence make disinformation easier to produce and more convincing. Anyone can use AI tools to create high-quality videos, images, and audio recordings in seconds.

Among the examples are videos claiming to show the American aircraft carrier USS Abraham Lincoln on fire at sea, so convincing that President Trump claimed to have called his generals to verify their authenticity.

Trump later stated on his platform Truth Social: “Not only was it not burning, it wasn’t even targeted, Iran knows not to do that!”

Other examples include debunked videos purporting to show American soldiers in tears or destroyed buildings in Gulf cities.

“The use of AI is widespread and becoming increasingly difficult to detect,” notes Jones.

The speed at which content spreads online complicates verification for the general public.

“In a rapidly evolving conflict, verified information often arrives late, creating a void immediately filled by misinformation,” explains Jones. “When people are worried, they thirst for information, but this information is often false.”

Unverified content can reach millions of people in minutes, leaving the public with the delicate task of verifying material that is often very realistic or spread across multiple platforms.

Alongside AI-generated combat images, speculation that Israeli Prime Minister Benjamin Netanyahu was dead spread widely last week.

“Some users spotted visual flaws in a low-quality video released on March 13 by Netanyahu’s office, claiming that Netanyahu appeared to have six fingers on one hand, a telltale sign of AI use,” Jones recounts.

Netanyahu then released several “proof of life” videos to silence the rumors. However, speculation about his death persists online.

Some content circulating online may be part of coordinated campaigns to divert attention or to persuade and influence public opinion.

“We see suspicious and anonymous accounts, with histories of multiple name changes and no discernable identity, relaying false information and videos generated by AI,” explains Jones.

These accounts may seem credible but are often linked to state-backed actors or individuals seeking to profit from sensational content, he points out.

In some cases, automated accounts, or bots, amplify certain narratives by sharing and commenting on posts, creating the impression that these narratives are more widely shared than they actually are.

Not all videos created by AI are meant to deceive. Some are intentionally designed as parodies or satires.

“These deepfakes generated by AI have crossed a critical threshold, the small flaws that allowed them to be detected have disappeared, and this technology is now accessible to anyone with a smartphone,” adds Jones.

Among the examples circulating online are a video portraying Trump as the new supreme leader of Iran, as well as clips showing Netanyahu as a malfunctioning robot or with extra fingers.

In fast-moving conflicts, videos of this kind can become detached from their original context and spread very quickly.

The proliferation of misleading information online makes it increasingly challenging for the public to distinguish between truth and falsehood.

“False information can spread up to ten times faster than accurate information on social networks, and corrections are rarely seen or believed as much as the initial false claim,” observes Jones.

“Outrage drives sharing before fact-checking has time to take place, and that’s exactly what malicious actors are looking for,” he continues.

Jones believes that spectacular images should be approached with the same caution as unverified information.

“As the conflict continues, the battle also rages on social networks, leaving ordinary citizens to navigate a complex mix of misinformation, satire, and manipulated content.”